What Thin and Duplicate Content Is, How to Identify It, and How to Fix a Google SEO Penalty

Thin content can damage your site's reputation in the eyes of search engines and visitors alike, especially for a business that depends on organic traffic and conversion-focused content. Yet the problem may only become apparent once Google has issued a manual action against your site. For many businesses this feels like a thin content penalty, and it can lead to a loss of traffic and visibility in search results.

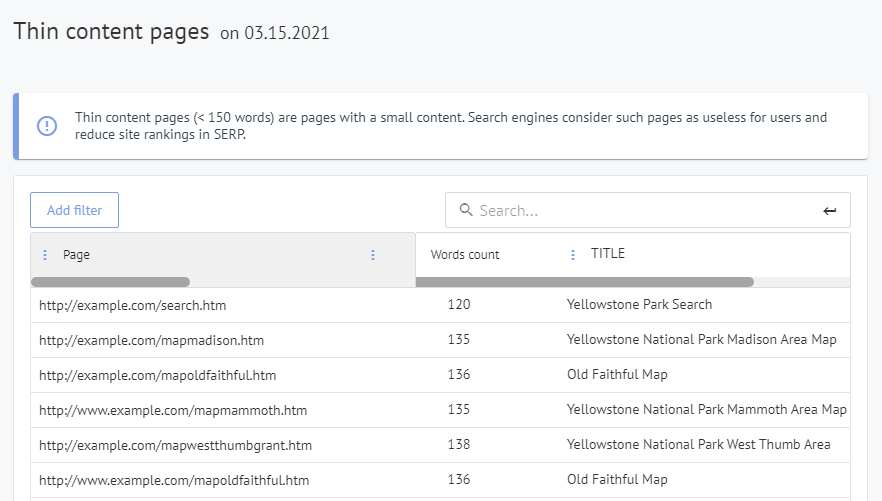

For this reason, it's always best to strike first and identify issues quickly using professional SEO tools and a structured audit process. This is where Labrika can help, thanks to our SEO audit and its clear data on thin content issues, duplicate pages and low-quality content, based on engagement metrics, behavioural signals and technical analysis. The audit gives you a comprehensive, automated assessment and a list of thin content pages on your site, with diagnostics drawn from Google Search Console and internal analysis reports. You can see which pages are low in word count, offer little real value or are duplicated, and are therefore causing poor rankings, weak engagement and a negative user experience. You can also understand the business impact on leads, sales, brand trust and long-term marketing performance.

Understanding what thin content is in the context of Google's quality evaluations helps your team determine which pages fall below the required content quality threshold and which are simply short but still provide unique value to users and meet the search intent of your target audience.

What are thin content pages, and how do you improve them for SEO performance and user experience?

Thin content is typically easy to identify because it has none of the information a user seeks and does not match the search intent behind the query in Google or other search engines. Such a page has:

- No relevant information, authoritative sources, studies or data that build trust and demonstrate real expertise.

- No content that satisfies the user's search query or answers the questions that someone searching for the topic expects the page to address.

- No apparent knowledge of, or expertise in, the subject matter from an expert author, company or brand.

- No actionable material or relevant and helpful links to related resources, videos, images, reviews or detailed guides.

Basically, thin content delivers zero value to the users of a site. The user therefore has no choice but to leave the page and search again for the information. This leads to a high bounce rate and a negative user experience. From an SEO perspective, it also signals to Google that the page does not meet user needs, and a cluster of thin pages can drag down the SEO performance and engagement of the whole site.

Why is thin content damaging to your website?

Thin content can have a negative effect on your ranking in the search engines and on your brand's image. Users will be unlikely to click on any call to action or to navigate to other parts of your site. They may also bounce off the page, which Google views as a negative indicator. Low-quality content often leads to lower conversion rates, fewer leads and worse overall performance in search results, as Google relies on such engagement metrics to determine whether pages are thin or deliver value.

In the last 10 years, Google has taken a lot of action to improve its algorithm and prioritize user satisfaction. Multiple core updates and the publicly available webmaster guidelines show that Google aims to demote pages that manipulate rankings with doorway pages, keyword stuffing or thin affiliate pages.

Its system has now developed into a highly intelligent 'rubbish content detector'. It is best to avoid having your site considered 'low value', because it will then not appear at the top of the SERPs, and to avoid thin content patterns that make Google doubt the relevance of your pages. Even worse, you may receive a manual action from Google. If that happens, the affected pages, or the whole site, can be demoted or removed from search results until the issue is fixed. Manual actions are reported in Google Search Console and can severely limit visibility.

For a commercial site, this situation affects more than rankings. Clusters of thin content across product pages, category pages and blog articles usually cause drops in traffic, higher exit rates and lost revenue opportunities, and management teams often underestimate how quickly these effects can spread to multiple categories and URLs.

9 common sources of thin content pages

Thin content usually indicates underlying content and technical optimization problems that affect the whole site and can confuse both visitors and crawlers. It is an on-page SEO issue that is frequently triggered by:

- Doorway pages created only for specific keywords or locations, for instance local doorway pages that send users to the same thin destination.

- Affiliate pages where the main task is to push clicks on affiliate links instead of providing independent reviews or helpful information.

- Content scraped or copied from other sources, competitor sites or media without adding unique insights.

- Duplicate content across multiple URLs, canonical variations or language versions, plus near-duplicate templates and other duplicates generated by filters.

- Category pages that contain only auto-generated lists, no substantial text and little context around the products or articles.

- Automatically generated pages created by scripts, internal search parameters or tag archives that are not useful for visitors.

- Poor quality copywriting that lacks depth, contains spelling errors or was automatically generated without expert input.

- Pages without enough text to explain the topic, answer questions or compare options in a way that helps readers make a decision.

- Keyword-stuffed pages where keyword stuffing and over-optimization replace natural writing and harm trust.

Google does recognize that certain utility pages are expected to have thin content. These pages still need a clear design and correct information, but Google evaluates them differently. Examples include:

- Contact Us pages that simply provide company contact details and address.

- Login pages required for user accounts and services.

Short content is not necessarily thin content

We are careful not to specify a minimum word count. This is because genuine value can sometimes be delivered in a few hundred words and doesn't always require 2,000 or more. Instead of focusing on raw word count, focus on whether the page offers a complete answer, covers related subtopics and satisfies search intent.

There is no one-size-fits-all rule, and that is why we say that you normally know thin content when you see it. When you review pages, ask yourself what counts as thin content in your niche and whether each page would impress a real customer.

Google's algorithm has become highly intelligent since the Panda update in 2011, which focused on poor-quality pages. Updates such as Panda and BERT evaluate on-page SEO signals, page structure, tags, metadata such as title tags and meta descriptions, and behavioural data to determine whether pages are thin or genuinely useful.

Newer releases, such as BERT, are very skilled at identifying poor content and recognizing valid, well-written copy. This means Google is constantly identifying patterns where pages look automatically generated, scraped or thin, and rewarding comprehensive, well-structured content.

How to fix thin content SEO problems

The solution is simple: add value! Google wants to present users with pages that fulfil their query intent, which is why it has been the number one search engine for so long. This means you must either remove or hide (noindex) offending pages, or rewrite them so that they add value for the user. Although this is a challenge for a site with thousands of pages, it is vital if you want to maintain or improve your position in the SERPs. Practical steps include expanding content with expert insights, adding relevant images or video, improving design and internal links, updating outdated data and ensuring each page has a clear purpose. In some instances, setting noindex tags, adding canonical links or using redirect rules for obsolete URLs is the best way to protect overall SEO performance in Google.
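As a rough illustration of the noindex and canonical options mentioned above, the following sketch checks which indexing directives a page currently declares. It uses only Python's standard library; the function name `index_directives` and the sample markup are assumptions made for this example and are not part of Labrika.

```python
from html.parser import HTMLParser


class IndexDirectiveParser(HTMLParser):
    """Collects the robots meta directive and canonical URL from a page."""

    def __init__(self):
        super().__init__()
        self.robots = None     # content of <meta name="robots">
        self.canonical = None  # href of <link rel="canonical">

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            self.robots = (attrs.get("content") or "").lower()
        elif tag == "link" and "canonical" in (attrs.get("rel") or "").lower().split():
            self.canonical = attrs.get("href")


def index_directives(html):
    """Return whether the page is set to noindex and its canonical URL, if any."""
    parser = IndexDirectiveParser()
    parser.feed(html)
    noindex = parser.robots is not None and "noindex" in parser.robots
    return {"noindex": noindex, "canonical": parser.canonical}


# Hypothetical page markup, used only to demonstrate the check.
sample = """
<html><head>
<meta name="robots" content="noindex, follow">
<link rel="canonical" href="https://example.com/guide/">
</head><body></body></html>
"""
print(index_directives(sample))
# {'noindex': True, 'canonical': 'https://example.com/guide/'}
```

Running a check like this across a list of thin URLs can confirm that pages you meant to hide are really marked noindex, and that pages you want to rank are not.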

In practice, many teams use Labrika to plan steps, prioritize thin content pages by business value, clarify what resources are required and ensure each improvement task is completed, tracked and reported in a structured, repeatable process that supports long-term optimization work.

Commercial impact of resolving low value pages

When thin content is replaced with detailed, original and relevant information, engagement metrics usually improve quickly. Visitors stay longer, read more articles, view more product pages and are more likely to complete forms, request services or contact your company. This creates a positive signal for algorithms and for human reviewers.

By improving the thin content that affects key customer journeys, you can remove obstacles in the funnel, support other marketing activities such as ads and email campaigns, and help your brand stand out against any competitor that still relies on copied or automatically generated content.

How Labrika can help you to identify thin content pages

A data-driven approach is useful for identifying thin content pages, and it saves you the energy of trawling through every page. Start by performing a site audit in Labrika's dashboard. You can then extract the list of URLs with thin content. Combine Labrika's reports with Google Search Console coverage and performance data to understand which URLs are indexed, which pages are excluded and where thin content issues affect visibility. Then analyze the following key metrics to narrow down where to start:

- How much revenue (if any) does the page generate from ecommerce sales, leads or other conversions?

- Number of visitors to the page from organic Google search results and other channels.

- Duration of typical visits, which can signal whether the content has enough depth.

- Does the user navigate to other pages on your site, following internal links to related topics?

- What external backlinks exist to the page, and whether these backlinks come from trusted domains or low-quality sources.

- How well the page ranks for the keywords you have assigned to it, compared with competitor pages and industry benchmarks.

After this, you can prioritize the most important pages and fix their thin content issues first.
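One way to turn the metrics above into a priority order is sketched below. It is a minimal example, not Labrika's scoring method: the weights, the 60-second target and the `PageMetrics` fields are all hypothetical, and it uses only a subset of the listed metrics. The idea is that a page is most urgent when its business value is high and its engagement is weak.

```python
from dataclasses import dataclass

# Hypothetical weights and target; tune them to your own business.
VALUE_PER_VISIT = 0.10     # assumed value of one organic visit
VALUE_PER_BACKLINK = 5.0   # assumed value of one external backlink
TARGET_SECONDS = 60.0      # assumed "healthy" visit duration


@dataclass
class PageMetrics:
    url: str
    revenue: float
    organic_visits: int
    avg_visit_seconds: float
    backlinks: int


def priority_score(page: PageMetrics) -> float:
    """Business value multiplied by how far engagement falls short of the target."""
    value = (
        page.revenue
        + page.organic_visits * VALUE_PER_VISIT
        + page.backlinks * VALUE_PER_BACKLINK
    )
    engagement_gap = max(0.0, 1.0 - page.avg_visit_seconds / TARGET_SECONDS)
    return value * engagement_gap


def prioritize(pages):
    """Return pages ordered from most to least urgent."""
    return sorted(pages, key=priority_score, reverse=True)


# Made-up sample data for illustration only.
pages = [
    PageMetrics("/guide", 200.0, 2000, 90.0, 30),
    PageMetrics("/pricing", 500.0, 1000, 20.0, 10),
    PageMetrics("/blog/old-news", 0.0, 50, 10.0, 0),
]
for page in prioritize(pages):
    print(f"{page.url}: {priority_score(page):.1f}")
```

In this sample the pricing page comes first, because it earns revenue but holds visitors for only 20 seconds, while the guide scores zero because its visits already exceed the target duration.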

Using these reports, teams can determine the most efficient method for each cluster of thin content, decide whether to update, consolidate or remove individual URLs, and ensure that high-value sections such as pricing pages, core service categories and the home page are protected from accidental changes that could harm rankings.

How to improve the problem pages you've identified

Review and rework problem pages

That might mean rewriting them to create more valuable content, or adding headings and sub-headings. In general, ensure the content matches the expectations behind the page's keywords. Review title tags, meta descriptions, headings, internal links, images and overall on-page SEO so that the structure helps readers and search engines understand the topic.

When creating content for these pages, focus on user experience rather than simply increasing length; include unique insights from an expert author, add clear explanations that answer questions your audience actually asks, compare similar options where helpful, and ensure the design, loading speed and internal navigation support readers on different devices and locations.

Consolidate pages where possible

If several pages address the same keywords or topics, then move or amalgamate content. Typically, we move content from the lowest-performing pages to the best-performing ones. This approach helps reduce duplicate content, avoid keyword cannibalization and create a single, more comprehensive article that can stand out in Google search results.

Where appropriate, you can combine several pages that are thin on detail into one complete version that contains all essential information, redirects the older URLs, preserves link equity and provides a clearer structure for both visitors and crawlers.
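The consolidation rule described above (keep the best performer, fold the rest into it) can be sketched as follows. This is a simplified illustration: the input tuples, the use of organic clicks as the performance measure and the function name `consolidation_plan` are assumptions for the example.

```python
from collections import defaultdict


def consolidation_plan(pages):
    """Group pages by target keyword and pick the best performer to keep.

    pages: iterable of (url, target_keyword, organic_clicks) tuples.
    """
    by_keyword = defaultdict(list)
    for url, keyword, clicks in pages:
        by_keyword[keyword.strip().lower()].append((clicks, url))

    plan = {}
    for keyword, group in by_keyword.items():
        if len(group) < 2:
            continue  # only one page targets this keyword, nothing to merge
        group.sort(reverse=True)  # highest click count first
        plan[keyword] = {
            "keep": group[0][1],
            "merge": [url for _, url in group[1:]],
        }
    return plan


# Made-up sample data for illustration only.
pages = [
    ("/thin-content", "thin content", 320),
    ("/blog/what-is-thin-content", "Thin Content", 40),
    ("/blog/thin-pages", "thin content", 15),
    ("/pricing", "seo audit pricing", 90),
]
print(consolidation_plan(pages))
```

Here the three pages that target "thin content" form one group, with `/thin-content` kept and the two blog posts marked for merging, while `/pricing` is left alone because nothing competes with it.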

Redirect pages to better-performing ones

Pages that already have some minor link juice can be redirected. This may be a better solution in some cases, especially where content is near-duplicate or cannot realistically be improved. Use 301 redirects or canonical tags so that Google clearly understands which version is the original and does not treat the other URLs as duplicate content.
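When many pages are redirected over time, redirects can end up pointing at other redirects. The sketch below, a hypothetical helper rather than a feature of any particular tool, flattens such chains so that every old URL maps directly to its final destination, and it raises an error if it finds a loop.

```python
def resolve_redirects(redirects):
    """Map every source URL straight to its final destination.

    redirects: dict of {old_url: new_url}, where new_url may itself be redirected.
    """
    resolved = {}
    for source in redirects:
        seen = {source}
        target = redirects[source]
        # Follow the chain until we reach a URL that is not redirected.
        while target in redirects:
            if target in seen:
                raise ValueError(f"redirect loop involving {target}")
            seen.add(target)
            target = redirects[target]
        resolved[source] = target
    return resolved


# Made-up redirect rules for illustration only.
rules = {
    "/old-guide": "/guide-2020",
    "/guide-2020": "/guide",
    "/tips": "/guide",
}
print(resolve_redirects(rules))
# {'/old-guide': '/guide', '/guide-2020': '/guide', '/tips': '/guide'}
```

The flattened map can then be used to write one 301 rule per old URL in whatever server or CMS configuration you use.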

Examples of thin content pages you should audit

Before reviewing detailed examples, agree within your team on what counts as thin content for your audience and industry, so that everyone uses the same term and criteria.

- Product pages with only a few words of text, no unique description, no reviews and no comparison table, which look like thin content to Google.

- Blog articles that simply repeat news from other sources, use scraped or copied text and do not add expert analysis or original insights.

- Automatically generated local pages that just change the city name while the rest of the content is duplicated, which can look like doorway pages and lead to manual actions.

- Thin content pages built only for ads or affiliate clicks, with aggressive banners, little information and no clear benefit for visitors.

- Utility pages such as a privacy policy or terms of service that are copied templates; these are expected to be short, but should still be accurate and should not be indexable duplicates across multiple domains.

- Pricing or service pages that provide almost no detail about services, features, process or benefits and simply request that visitors contact the company.

Each example shows how thin content issues can appear in many areas of a site, from the home page to individual service pages, and why Google may reduce visibility or apply a penalty if such patterns affect a significant portion of the site and overall business performance.

Managing duplicate content with Labrika

Labrika reports highlight duplicate content clusters, including near-duplicate titles, duplicate meta descriptions and duplicate blocks of content across multiple URLs. Use these insights to determine which version should stay indexable, which pages should redirect and where you can combine duplicates into a single, stronger page that better serves users and search engines.
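To give a feel for how near-duplicate text can be detected, here is a small sketch based on word shingles and Jaccard similarity. This is a common general technique and is shown as an assumption-laden example; it is not a description of how Labrika's reports work. The three-word shingle size and the 0.5 threshold are arbitrary choices.

```python
import re
from itertools import combinations


def shingles(text, size=3):
    """Return the set of overlapping word sequences of the given size."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a, b):
    """Share of shingles that two pages have in common (0.0 to 1.0)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def near_duplicates(pages, threshold=0.5):
    """Return (url_a, url_b, similarity) for every pair at or above the threshold."""
    sets = {url: shingles(text) for url, text in pages.items()}
    pairs = []
    for url_a, url_b in combinations(sorted(sets), 2):
        score = jaccard(sets[url_a], sets[url_b])
        if score >= threshold:
            pairs.append((url_a, url_b, round(score, 2)))
    return pairs


# Made-up page texts: two local pages that differ only in the city name.
pages = {
    "/plumber-leeds": "Our plumbers in Leeds offer fast emergency repairs "
                      "and free quotes every day of the week",
    "/plumber-york": "Our plumbers in York offer fast emergency repairs "
                     "and free quotes every day of the week",
    "/boiler-guide": "Read our guide to choosing a boiler for a small flat",
}
print(near_duplicates(pages))
```

The two local pages are flagged as a pair because most of their three-word sequences are identical, while the boiler guide shares none with either of them.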

In summary, get help by using SEO professionals or data-led SEO software such as Labrika’s site audit. An experienced agency or internal team can design a content strategy focused on high value articles, clear targets and ongoing optimization.

Larger sites may present too much of a challenge to be easily and quickly rectified. Using software to identify thin content pages can save you time and money in the long run. For a very large content site, automation, templates, consistent writing guidelines and regular audits are essential to keep duplicate content under control and prevent thin content issues from reappearing.

Start your free trial now

Start a free content audit with Labrika to learn how your existing text, product pages and articles perform in Google, which URLs are affected by thin content issues or duplicate content, and which steps will have the most significant impact.

During the free trial, you can check thin content pages, see detailed reports on low quality content, understand search intent gaps, and quickly share findings with your team so they can plan further writing, development and internal optimization tasks.

Features

Labrika’s features include comprehensive SEO audit reports, thin content detection, duplicate content analysis, on-page recommendations, internal link analysis and technical checks that support better crawl efficiency and index management.

- Automated crawling, analysis and reports that show thin content pages, doorway pages, affiliate pages and pages low in value, helping you prioritize effort based on potential business benefits.

- Page-level data on search results performance, click behaviour, bounce rates and engagement so you can determine whether pages are thin content or simply short but valuable.

- Tools for keyword research support, on-page tags review, canonical and redirect checks, and internal link structure analysis to ensure that thin content does not spread across similar templates or categories.

- Clear dashboards for content quality management that help your team start, track and complete optimization projects, instead of making isolated changes that don’t address the core problem.

- Content-focused insights that highlight pages with little substance, pieces that lack depth or context, and sections that might be seen as thin content by algorithms, together with practical suggestions on structure, length and topics to cover.

- Technical and content reports that connect duplicate content patterns, automatically generated pages and scraped material, giving you a complete view of risks that could harm visibility or trust.

Updated on April 15, 2026