Fix Common Keyword Density and SEO Mistakes

This article explains how Labrika analyzes keyword density for on-page SEO and which common mistakes prevent pages from reaching the top results.

What does this message mean in the content optimizer?

"It was not possible to calculate the recommendations because not all keywords have a common density interval. It is necessary to remove some of the keywords of this page and update the recommendations." This affects SEO metric tracking for the page.

In Labrika's content optimizer, this message relates to how the system evaluates keyword usage against competitors. It uses a keyword density tool and other SEO tools to measure keyword frequency and detect keyword stuffing, while keeping content quality and user experience in balance.

Essentially, every keyword has a certain density on landing pages. For each target keyword, Labrika compares the number of keyword mentions with the total number of words on competitor pages to determine an ideal range for safe content optimization.
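As a rough illustration of the ratio described above, here is a minimal sketch that counts mentions of a single word and divides by the total word count. The function name `keyword_density` and the tokenization rule are assumptions made for this example. Labrika's exact formula is not documented here and may differ.

```python
import re


def keyword_density(text: str, keyword: str) -> float:
    """Return mentions of `keyword` as a percentage of all words in `text`.

    Assumption: words are runs of letters, digits, and apostrophes,
    compared case-insensitively. Only single-word keywords are handled.
    """
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        return 0.0
    mentions = sum(1 for word in words if word == keyword.lower())
    return 100.0 * mentions / len(words)


sample = "Mobile phones and mobile traffic: a mobile guide"
density = keyword_density(sample, "mobile")
print(f"{density:.1f}%")
```

The sample text has 8 words and 3 mentions of "mobile", so the sketch reports 37.5%, far above anything a real page should aim for.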

Several sites in the TOP10 of the SERPs must be discarded from our recommendations because they may be there due to other factors, for example Wikipedia or YouTube. These results often include an online article, an overview site, or a product listing where off-page factors and brand authority dominate, so SEO experts should review the context before relying on them for keyword density benchmarks.

For example, say we are writing a blog article and one of the pages in the TOP10 for our keywords is a YouTube page with only 50 words. It makes no sense to include this page in our recommendations. If we replicated this type of page, with only 50 words, we would never see it in the TOP10, because the video is ranked by other factors and is added to the SERP to give variety to the content offered. Mixed result sets like this matter because search engines rank video differently, and modeling a page only on such results would not help your website rank higher or grow organic traffic for that keyword.

There is a second problem. Imagine we optimize our page for the extreme value of keyword density. If, a day later, the site with this extreme value drops out of the TOP10, the range of keyword density among the remaining TOP10 sites narrows, and the system will again ask you to rework your page. This makes SEO unstable and can force you into repeated on-page optimization cycles.

Therefore, it is much easier to remove the extreme values from our recommendations. Doing so is good practice in data-driven SEO because it lets the algorithm focus on the most relevant content and provides more reliable recommendations.
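One simple way to picture this is to sort the competitor densities and drop the lowest and highest values before taking the range. The sketch below does exactly that. The function `trimmed_range`, the `trim` parameter, and the sample numbers are hypothetical, and the actual rule Labrika uses to discard extremes is not specified in this article.

```python
def trimmed_range(densities, trim=1):
    """Return (low, high) after dropping `trim` values from each end.

    Assumption: a fixed number of extremes is removed per side. Other
    outlier rules (percentiles, interquartile range) would work similarly.
    """
    ordered = sorted(densities)
    if len(ordered) <= 2 * trim:
        raise ValueError("not enough values left after trimming")
    kept = ordered[trim:len(ordered) - trim]
    return kept[0], kept[-1]


# Hypothetical densities (in percent) for ten TOP10 pages.
top10 = [0.2, 1.1, 1.3, 1.4, 1.5, 1.6, 1.8, 1.9, 2.0, 6.5]
low, high = trimmed_range(top10)
print(low, high)
```

With these sample values the untrimmed range is 0.2 to 6.5, while the trimmed range is 1.1 to 2.0. If the 6.5 page left the TOP10 tomorrow, the trimmed range would barely move.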

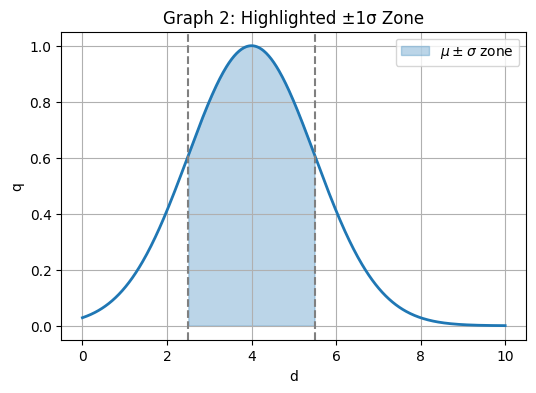

Here is a simplified graph that shows how many sites in the TOP10 use each density of this word. It shows how keyword density values are distributed across competitors and which segment of that range is safest for SEO.

When we need to place several key phrases on one page, we must find the overall confidence interval for each word within these key phrases, while still matching search intent and audience expectations.

For example, if we want to place the phrases "mobile phones" and "mobile traffic" on one page, then we need to find the overall confidence interval for the word "mobile", which is included in both key phrases. You should also consider synonyms and variations of these phrases so that the text reads naturally rather than being forced.

If the text for these words on the pages in the TOP10 is similar, then the overall confidence interval will be found, and Labrika can calculate keyword density recommendations more precisely:

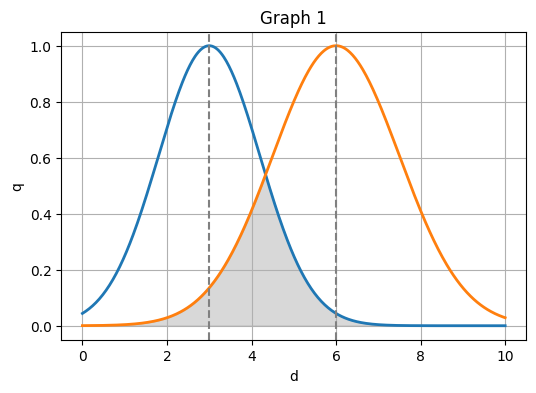

If the text for these words on the pages in the TOP10 is very different, then there will be no overall confidence interval. In this situation the keyword density tool intentionally avoids giving strict numeric targets.

In this case, it is undesirable to use the average value, since this density will be too low for one key phrase and too high for another. That creates a risk of being lowered in the SERP for over-optimization. From an SEO perspective, it is better to work within the confidence interval for each key phrase instead of chasing a single average density value.
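The idea of an overall interval can be sketched as the intersection of the per-phrase density ranges for the shared word. In the example below, the function `common_interval` and all numbers are assumptions made for illustration. How Labrika actually derives its confidence intervals is not described in this article.

```python
from typing import List, Optional, Tuple

Interval = Tuple[float, float]


def common_interval(intervals: List[Interval]) -> Optional[Interval]:
    """Return the overlap shared by all intervals, or None if there is none."""
    if not intervals:
        return None
    low = max(lo for lo, _ in intervals)
    high = min(hi for _, hi in intervals)
    return (low, high) if low <= high else None


# Hypothetical ranges (in percent) for the word "mobile" in two key phrases.
similar = common_interval([(1.0, 2.5), (1.8, 3.0)])
different = common_interval([(0.5, 1.0), (2.0, 3.5)])
print(similar, different)
```

In the second case the ranges do not overlap, so no single density satisfies both phrases. Averaging the midpoints (0.75 and 2.75) gives 1.75, which lies outside both ranges: too high for the first phrase and too low for the second.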

Remember that we are calculating the density to use for a word because this word is part of several key phrases. If the density does not fit, one of the phrases on the landing page will have less chance of getting into the TOP10 of the SERP. SEO can be sensitive to even small changes in keyword density for terms shared across several phrases.

There are several reasons that can lead to this situation:

- The content on the landing pages for the different key phrases in the group where the error occurred is different. In that case, you should separate these key phrases across different landing pages. This separation helps search engines understand each page's SEO focus and improves overall content structure.

- You manually excluded competitors that were very similar for the key phrases on this page, and as a result the link indicating that these key phrases can be placed on the same landing page has disappeared. You can try re-including the previously excluded competitors. This is especially important for business websites with many services and products, where SEO strategy, customer journeys, and internal links depend on clearly defined landing pages.

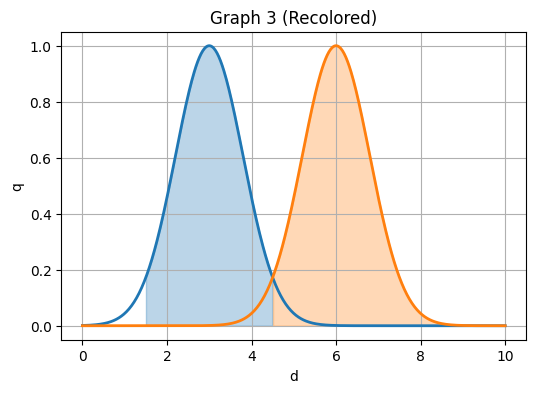

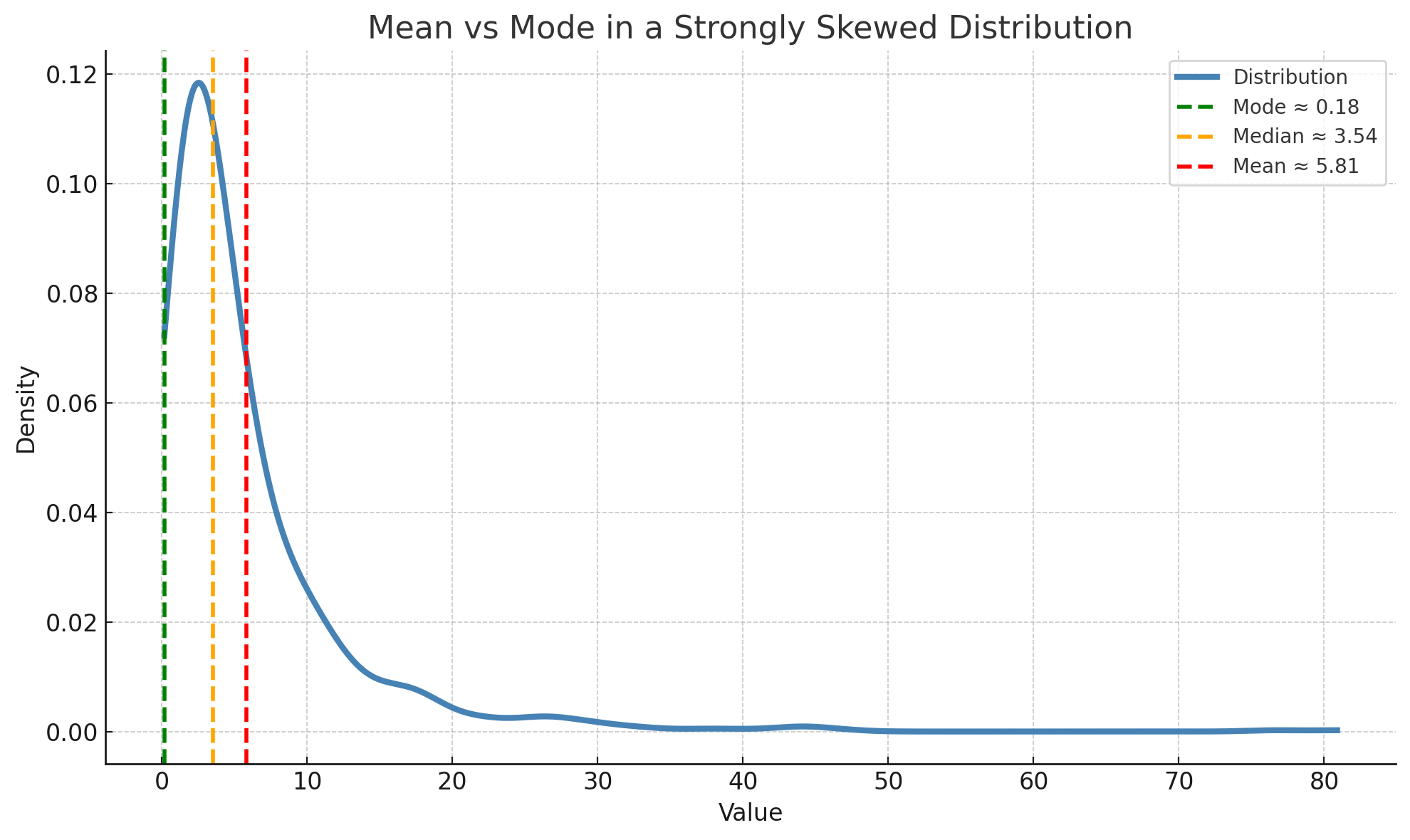

Finally, an explanation of why you should never use averages. The graph shows a distribution of keyword density that is often found on sites in the TOP10. In practice, relying on averages is a common SEO error because it hides how different groups of pages use the keyword.

To provide variety, the search engine often adds sites with different types of content in order to best satisfy the user. For example, an overview site may be added to the search results alongside online stores.

This is often barely noticeable to a user, but it makes a big difference when analyzing the numbers to get an average. Let's say there are 9 blog sites in the search results and 1 site with a huge price list that has a high keyword density. This will shift the average away from the majority group, skewing the results.
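The effect is easy to reproduce with made-up numbers. The sketch below uses nine hypothetical blog densities and one hypothetical price-list density, then compares the mean with the median using Python's standard `statistics` module. The figures are invented for illustration and are not real SERP data.

```python
from statistics import mean, median

# Hypothetical keyword densities (in percent): 9 blogs and 1 price list.
blogs = [1.0, 1.1, 1.2, 1.2, 1.3, 1.3, 1.4, 1.5, 1.6]
price_list = [9.0]
densities = blogs + price_list

avg = mean(densities)    # pulled upward by the single price list
mid = median(densities)  # stays inside the blog group

print(f"mean={avg:.2f} median={mid:.2f} highest blog={max(blogs):.2f}")
```

Here the mean lands above the density of every single blog page, so a page written to match the average would be denser than all nine pages in the majority group.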

It is also important to note that a page doesn't always need to be made exactly in accordance with what we see in the TOP10.

Firstly, there are categories of sites that lag behind others in terms of the quality of features and content offered. In cases like this, it is more useful to improve the site rather than follow what others have done, in order to get ahead of the competition.

Secondly, if there is a lot of competition, doing something different may be the only way to set your site apart. At the same time, you must clearly understand how the site should be built to give it a chance of reaching the TOP10 in Google.

Common keyword density mistakes in SEO

Understanding how keyword density interacts with SEO helps avoid several common mistakes that can damage rankings and readability.

- Focusing on a single keyword density percentage for every page, instead of working within a range derived from competitors, is one of the most common mistakes and can lead to unnatural text and weaker SEO results.

- Repeating a specific keyword so often that keyword stuffing becomes obvious to algorithms is another critical mistake. It harms user experience, engagement rates, and overall page SEO.

- Ignoring context, headings, and related keywords while focusing only on raw keyword density is a subtler mistake that reduces readability and makes it harder for readers and search engines to understand the intent of the content.

- Copying outdated averages from competitors without regular review is a strategic SEO mistake that can lock your site into poor keyword usage patterns instead of adapting to Google updates and new queries.

Best practices for managing keyword density and SEO

The best approach is to treat keyword density as one tactical metric within a broader SEO strategy and to follow a small set of proven best practices that keep both search engines and readers satisfied.

Below are concise recommendations that reflect good practice for managing keyword density without over-optimization.

- Start by using Labrika's keyword density checker and keyword density analysis within the content optimizer to measure current density for each key phrase and compare your page with competitor information.

- Then use the keyword density tool to calculate keyword density for your draft text, check whether the results fall within the recommended percentage range, and adjust wording so the keyword appears naturally.

- As you create content, ensure every target keyword supports the page topic, fits the context of surrounding sentences, and adds real value for the audience rather than being forced into the article.

- Regularly review keyword performance with Labrika's SEO tools and other keyword reporting tools, update pages when competitor strategies change, and measure how adjustments influence traffic, rankings, and conversions.

Applying this approach consistently across your site helps build authority, improve visibility, and support long-term SEO success.
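The range check from the second recommendation above can be sketched in a few lines. The function `check_density` and the sample range are hypothetical. In practice the recommended range would come from the content optimizer, not from hard-coded numbers.

```python
def check_density(density: float, low: float, high: float) -> str:
    """Classify a measured density against a recommended range (in percent)."""
    if density < low:
        return "below"
    if density > high:
        return "above"
    return "within"


# Hypothetical recommended range of 1.0% to 2.0% for one key phrase.
for measured in (0.4, 1.5, 3.2):
    print(measured, check_density(measured, 1.0, 2.0))
```

A "below" or "above" result is a prompt to reword the text so the keyword appears naturally, not to insert or delete mentions mechanically.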

How to integrate Labrika’s tools into your keyword workflow

To turn these recommendations into repeatable results, structure your work with Labrika around four stages: researching keywords, drafting content, auditing with tools, and updating pages.

Step 1: Research and group your keywords

Begin with thorough keyword research to identify the main target keyword, supporting keywords, and related keywords that match your topic and audience queries.

Group keywords by search intent and page type so that each landing page can focus on a clear phrase set instead of mixing unrelated keywords in one piece of content.

This structure helps search engines understand content themes, keeps keyword usage natural, and makes later optimization decisions easier to manage.

Step 2: Draft content before using tools

Write a full draft of your content that answers key questions, uses headings to divide sections, and delivers quality content that is easy to read on any device.

Include the primary keyword and a few secondary keywords where they fit naturally in the title, meta description, introduction, body text, and conclusion.

Aim for a word count that lets you cover the topic in depth without padding, and avoid forcing keywords into every sentence just to hit a perceived ideal percentage.

This approach ensures your article remains focused on user experience while still giving Labrika enough data to evaluate keyword signals in the content.

Step 3: Audit with Labrika’s keyword tools

Run the content optimizer and review the suggestions in Labrika’s keyword tool reports, which compare your pages with competitors and highlight where keywords or headings may need adjustment.

Within this interface, Labrika’s keyword density tool helps you calculate keyword density for each URL based on competitor examples and the total number of words, instead of guessing.

This tool consolidates complex competitor data into clear ranges that are easy to act on.

Use this tool to spot pages where the number of keyword mentions, the keyword distribution between blocks, or missing related terms suggest that rewriting sections will give a better balance.

If Labrika reports that some phrases have no common density interval, revise the page targeting so each keyword combination maps to the right landing page.

Step 4: Implement changes and monitor results

After applying the recommendations, update your content, internal links, and meta tags, then re-crawl the site with Labrika to check how the changes affect rankings, clicks, and conversions.

Track performance over time in your analytics tools and correlate improvements with specific keyword and content changes rather than relying on one-off checks.

This feedback loop keeps your SEO aligned with user intent, guides ongoing content optimization, and helps your team make good decisions about which pages to expand, merge, or retire.

Updated on January 20, 2026.